AI Is a Mirror, Not a Machine

A few weeks ago I sat back from my screen and just stared at it.

I'd spent over an hour documenting a data cleaning process in Markdown: every step, every decision, every if/then rule. Painful, tedious, the kind of work that makes you question your career choices. Then I handed those instructions to a Claude Skill, watched it build and test itself, and within 30 minutes I had a tool that could execute roughly 50 sequential steps: clean messy data, generate multiple filtered tables across tabs, and run analysis on the output.

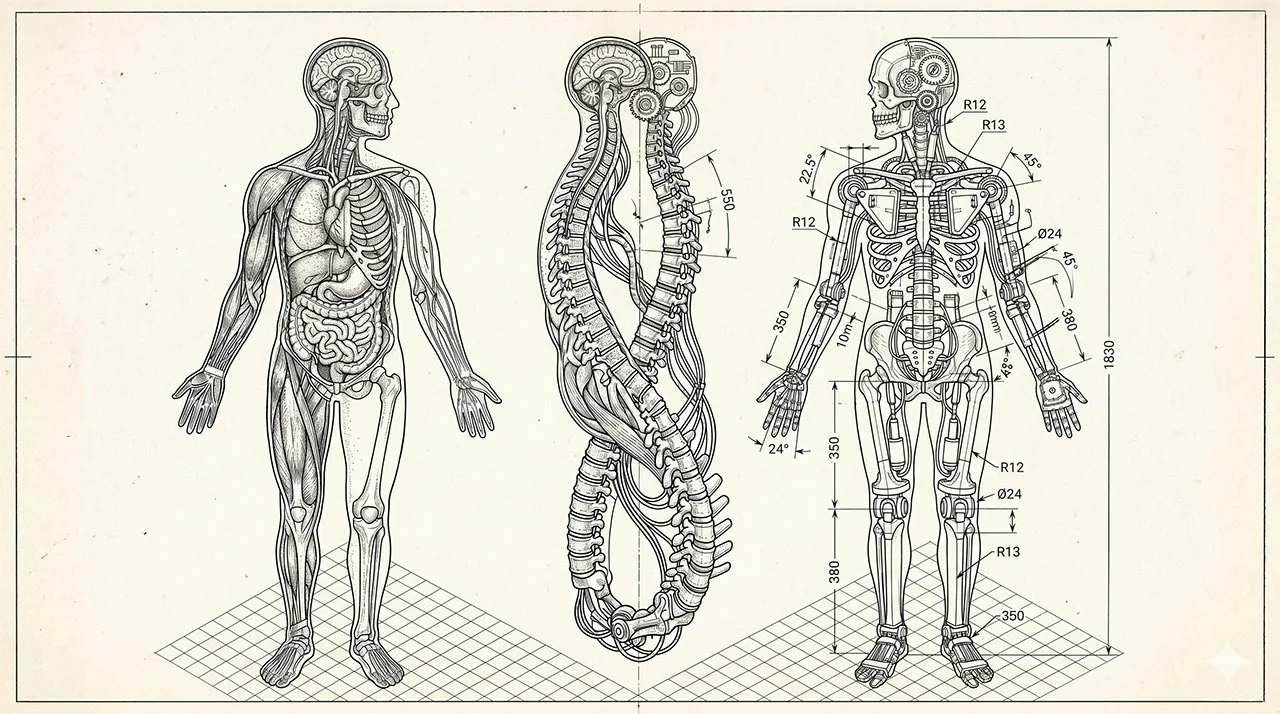

It was a sci-fi moment. Not because of what the technology did, but because I recognized the output. It looked like my work. It thought like I think. It was me, running faster than I can run.

That's when something clicked that I hadn't fully articulated before.

The Levers I Didn't Know Existed

A year ago, I understood AI in broad strokes. I knew about orchestrator agents and supervisor models. I knew the big platforms (GPT, Claude, Gemini, Copilot) and I used them regularly.

What I didn't understand was the depth of nuance beneath the surface.

Agents. Projects. Skills. Visual models. Extended thinking. Each one is a different lever, tuned for different problems. Twelve months ago those distinctions were invisible to me. I was treating AI like a single tool when it's actually a toolbox, and a growing one.

The industry didn't help. For a while it felt like every platform was racing toward a universal general model. One tool to do everything. The nuance got buried under the hype.

What I've learned since is that the nuance is where the real value lives.

The Hour That Made It Work

Back to the data Skill.

The build itself took 30 minutes. But the hour before it, the documentation hour, that's where the work actually happened.

When you teach a person a process, you get help you didn't know you were getting. They watch your screen. They ask questions. They catch your mouse moving toward a button before you explain why. There are a thousand non-verbal cues filling in the gaps of your instructions.

Text has none of that.

When I sat down to document those 50 steps, I couldn't rely on context clues or body language or a follow-up Teams message to clean up my ambiguity. I had to be precise in a way I've never had to be precise when teaching a human. Every exception needed to be named. Every decision tree had to be explicit. Every step that "everyone just knows" had to be written out.
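To make "every exception needed to be named" concrete, here is a minimal sketch of what a single cleaning rule can look like once it is fully spelled out. The function name, the placeholder values, and the rules themselves are hypothetical illustrations, not the contents of my actual Skill.

```python
from typing import Optional


def clean_amount(raw: Optional[str]) -> Optional[float]:
    """Hypothetical rule: turn a messy currency string into a float.

    Each branch is an exception a human trainee would pick up by
    watching over your shoulder. In written instructions, every one
    of them has to be named.
    """
    if raw is None:
        return None  # missing stays missing; never guess a value

    text = raw.strip()
    if text == "" or text.upper() in {"N/A", "NA", "-"}:
        return None  # assumed placeholder values that mean "missing"

    # Accounting style: (1,200.50) means -1200.50
    negative = text.startswith("(") and text.endswith(")")
    if negative:
        text = text[1:-1]

    text = text.replace("$", "").replace(",", "")
    try:
        value = float(text)
    except ValueError:
        return None  # unparseable input is treated as missing, not repaired

    return -value if negative else value


if __name__ == "__main__":
    for sample in [" $1,200.50 ", "(300)", "N/A", "abc"]:
        print(repr(sample), "->", clean_amount(sample))
```

None of these branches is clever. The point is that each one is a decision I would normally make without noticing, and the written version has to carry all of them.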

What surprised me was what that process revealed. I discovered things about my own workflow I hadn't consciously registered: assumptions I'd been making for years that only became visible when I was forced to write them down. The discipline of writing for AI made me understand my own process better.

Teaching a machine teaches you something about yourself.

You're the Problem (And the Solution)

If you tried to build something like this and it didn't work, I'd bet on one of two failure modes.

The first is impatience. People spend 10 minutes writing instructions, get mediocre output, and conclude the tool isn't capable. The tool is capable. The instructions weren't good enough.

The second is a philosophical gap. And this one is harder to close.

Most people treat AI as an external tool, something separate from them that produces output on command. That's technically accurate but practically limiting. The better mental model is that AI mirrors you. It can only reflect what you give it. Vague input produces vague output. Shallow teaching produces shallow results. But when you put in the real work (the hour of Markdown, the detailed instructions, the genuine encoding of how you think), what comes back is a digital embodiment of your expertise.

That's not a metaphor. It's how it works.

The Skill I built cleans data as well as a junior analyst and analyzes it as well as a senior one. Not because the AI is generally intelligent. Because I encoded 17 years of experience into the instructions and the AI runs those instructions faster than I ever could.

What This Means Practically

You don't need to build a 50-step Skill to feel this. Start smaller.

Take one task you do every week. Open a document and write down every step. Not a summary, every step. Write it as if you're teaching someone who has never seen your work before and has no way to ask a follow-up question. That document becomes your prompt.

When you run it through AI and the output looks right, you'll understand what I mean about the mirror. When it looks wrong, go back to the documentation. The gap in the output almost always points back to a gap in the instructions.

The goal isn't to hand work to AI. The goal is to encode yourself into AI and then run.

AI in its current state is most powerful when it amplifies expertise, not when it replaces effort.

One More Thing

There's something quietly strange about this process that I think is worth naming.

When the Skill produced its first clean output, it felt like watching a version of me work. Same logic. Same priorities. Same sequence. But faster, and without the parts of the job that drain energy.

It's a little weird. It's also genuinely fun in a way that's hard to explain to people who haven't experienced it.

Build the mirror. See what reflects back.