Before You Build the AI, Build the Brain

A teammate said something recently that stopped me mid-conversation.

We were deep in a platform replacement project. Months of work, a hard deadline, a contract expiring with almost no buffer. We were on a Microsoft Teams call, screensharing our documentation with the vendor. You know the scene: someone sharing their screen, the rest of the group watching files scroll past. The vendor's team went quiet for a second, and my teammate typed in the chat: "They're treating them like sacred texts."

He wasn't wrong.

What we'd dropped into that shared folder wasn't a quick brief or a requirements doc. It was 42 structured files covering every customer care topic, every decision path, every policy reference, and every scripted response, all documented, validated by subject matter experts, and reviewed three times before we touched a single line of platform configuration. Two months of work. A full team effort spanning hundreds of hours and one cross-country working session to get the details right.

It is, without question, the most important thing we've done on this project.

The Tool Isn't the Problem

People don't tell me AI is underperforming. They tell me it doesn't work. That it can't spell strawberry (IYKYK). That it keeps hallucinating. That they tried it and it wasn't worth the investment.

Here's the honest read: companies treat AI like a light switch. Pick a vendor, configure it over a weekend, flip it on, expect results. When the results don't come, they blame the tool.

The tool isn't the problem.

AI is a technology that requires configuring, testing, adopting, and critical thinking. It doesn't arrive knowing your business. It doesn't know your return policy, your reseller exceptions, your escalation paths, or the difference between how your process is documented and how your agents actually work. You have to teach it all of that.

And teaching it means building something first.

Most companies skip the hardest part and then wonder why the easy part didn't work.

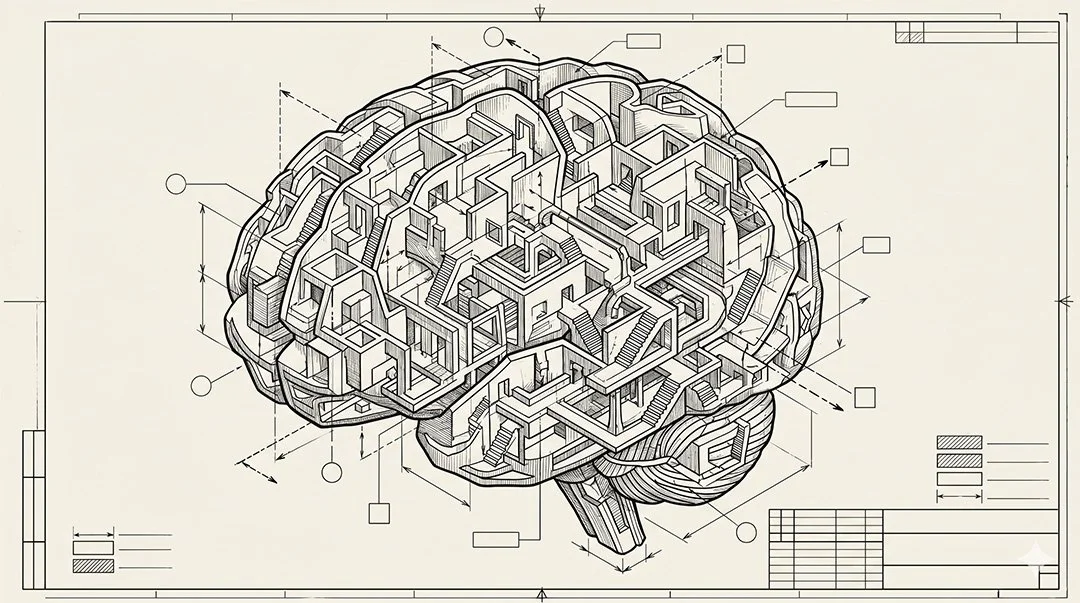

What the AI Brain Actually Is

In our case, the brain is a library of 42 structured files.

Half are workflow specifications: decision logic, process steps, system actions, and scripted responses for every customer interaction type. What does the AI collect when a customer reports a damaged shipment? When does a warranty claim escalate to Tier 2? What happens when a reseller order can't be cancelled? All of it documented, branching logic and all.

The other half are reference articles: policy details, product knowledge, FAQ-style content the AI draws on to answer questions directly without involving a human agent.
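To make "branching logic and all" concrete, here is a minimal sketch of how one workflow specification could be represented as data. Everything in it is an assumption for illustration: the `Step` class, the step ids, the scripted wording, and the action names are hypothetical, not the format of our actual files.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Step:
    """One node in a workflow: what to say, and where to go next."""
    prompt: str                                   # scripted response or question
    branches: dict = field(default_factory=dict)  # customer answer -> next step id
    action: Optional[str] = None                  # set only on terminal steps


# Hypothetical damaged-shipment workflow. Step ids, wording, and action
# names are illustrative placeholders, not real policy.
DAMAGED_SHIPMENT = {
    "start": Step("Do you have a photo of the damage?",
                  {"yes": "collect_order", "no": "request_photo"}),
    "request_photo": Step("Please send a photo so we can file the claim.",
                          action="wait_for_customer"),
    "collect_order": Step("Do you have your order number?",
                          {"yes": "file_claim", "no": "escalate"}),
    "file_claim": Step("Thanks, your claim has been filed.",
                       action="create_claim"),
    "escalate": Step("Let me connect you with a specialist.",
                     action="escalate_tier_2"),
}


def run(workflow, answers):
    """Walk the workflow with a list of answers and return the final action."""
    step = workflow["start"]
    for answer in answers:
        if step.action is not None:   # already at a terminal step
            break
        step = workflow[step.branches[answer]]
    if step.action is None:
        raise ValueError("ran out of answers before reaching a terminal step")
    return step.action


print(run(DAMAGED_SHIPMENT, ["yes", "no"]))
```

The point of a shape like this is that every branch has to be written down. If an SME can't say where a "no" leads, the gap shows up in the file instead of in production.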

Every file went through a source hierarchy, because we had to figure out which source was actually true before we could document anything. The existing platform's workflows were inconsistent with how agents actually operated. KB articles hadn't been touched in months. Processes lived in people's heads. We went upstream, to the people doing the work, and rebuilt from there.

Three review cycles. Multiple SMEs. One cross-country working session.

That's what it took to get to 42 files we trusted.

Build Once, Deploy Everywhere

Here's where it gets interesting.

Those 42 files aren't feeding one system. They're feeding four:

Customer-facing AI — the new platform handling chat, email, and voice interactions

Agent-facing AI — an internal system that surfaces suggested actions and knowledge to human agents in real time

Website FAQs — customer-facing help content drawn from the same source material

Training documentation — onboarding material for new hires and refreshers for the existing team

One knowledge layer. Four deployment targets.
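As a rough sketch of what "one knowledge layer, four deployment targets" can look like in practice, the snippet below renders a single validated article into four shapes. The `Article` fields, the renderer functions, and the target names are all hypothetical, and the content is placeholder text. Real platforms will each want their own import format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Article:
    topic: str
    policy: str       # the validated statement of fact
    agent_note: str   # internal-only guidance, never shown to customers


def for_customer_ai(a):
    return {"topic": a.topic, "answer": a.policy}


def for_agent_assist(a):
    return {"topic": a.topic, "answer": a.policy, "note": a.agent_note}


def for_website_faq(a):
    return f"Q: {a.topic}?\nA: {a.policy}"


def for_training(a):
    return f"{a.topic}\nPolicy: {a.policy}\nAgent guidance: {a.agent_note}"


# Hypothetical target names mapped to their renderers.
TARGETS = {
    "customer_ai": for_customer_ai,
    "agent_assist": for_agent_assist,
    "website_faq": for_website_faq,
    "training": for_training,
}


def publish(articles):
    """Render every article once per target, from the same source records."""
    return {name: [render(a) for a in articles]
            for name, render in TARGETS.items()}


library = [Article("Return window", "PLACEHOLDER policy text",
                   "PLACEHOLDER internal note")]
for target, items in publish(library).items():
    print(target, "->", items[0])
```

The design choice that matters is that the policy text lives in exactly one place. A correction to the source record flows to all four outputs on the next publish, instead of being fixed in one system and forgotten in three.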

That's the multiplier effect that rarely gets mentioned when people pitch AI projects. The two months of work doesn't just configure a chatbot. It becomes institutional knowledge: structured, validated, and reusable across every system that needs to understand how your business operates.

The platform is just the delivery mechanism. The knowledge layer is the real project.

This Isn't Just a Customer Care Problem

We built this for customer support, but the principle applies everywhere.

Before you build an AI analytics agent, you need structured data definitions: what does "market share" mean in your business, how is it calculated, what are the exceptions. Before you build a marketing AI, you need documented brand voice, campaign logic, and audience rules.

I built a Claude Skill recently that follows a 50-plus step process. The only way I could build it was to document every single step first, one by one, before writing a line of instructions. The documentation wasn't prep work for the build. It was the build.

Before you build anything, you need to answer one question: what does this AI need to know, and do I trust that what I'm giving it is accurate?

The 10-80-10 Model applies here, but at scale. The knowledge build is the first 10%. The catch: when the scope is large enough, that 10% takes two months and a team.

Most people want to skip to the 80%. That's the interesting part. That's where AI does the work.

But if the 10% is wrong, the 80% produces garbage. No amount of prompt engineering fixes a broken knowledge layer.

AI doesn't fail at the platform level. It fails at the foundation.

Where to Start

If you're planning an AI project, any AI project, start by asking three questions before you open a single vendor proposal.

1. What does this AI need to know? Make a list. Be specific. Don't stop at "our policies." Name every policy, every exception, every edge case that a human currently handles from memory.

2. Do I trust my source material? Most organizations have multiple versions of the truth. A SharePoint doc that hasn't been updated. A KB article that reflects old policy. A process that changed six months ago but never got documented. Find the gaps before the AI does.

3. Who has to validate this? The people who manage a process rarely know it as well as the people who execute it. Get your SMEs involved. Build review cycles into the timeline. What you discover in validation will surprise you. In our case, we found workflows that hadn't been updated in years, and entire authorized resellers were missing from our documentation. Not because anyone was careless, but because that's what happens when processes live in people's heads instead of structured files. The AI would have inherited every one of those gaps on day one.
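The second question, whether you trust your source material, can be partly mechanized before the SMEs get involved. Below is a minimal sketch that flags stale sources and topics where sources disagree. The `Source` fields, the 180-day threshold, and the sample records are assumptions made up for illustration. It only compares statements as exact strings, so it finds candidates for human review rather than answers.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Source:
    topic: str
    location: str       # e.g. a SharePoint doc or a KB article
    last_updated: date
    statement: str      # what this source claims the policy is


def audit(sources, today, max_age_days=180):
    """Return (stale sources, topics whose sources disagree)."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [s for s in sources if s.last_updated < cutoff]

    statements_by_topic = {}
    for s in sources:
        statements_by_topic.setdefault(s.topic, set()).add(s.statement)
    conflicts = sorted(topic for topic, stmts in statements_by_topic.items()
                       if len(stmts) > 1)
    return stale, conflicts


# Made-up sample records, not real policy.
sources = [
    Source("returns", "sharepoint", date(2023, 1, 1), "PLACEHOLDER version A"),
    Source("returns", "kb", date(2024, 5, 1), "PLACEHOLDER version B"),
    Source("warranty", "kb", date(2024, 5, 1), "PLACEHOLDER version C"),
]
stale, conflicts = audit(sources, today=date(2024, 6, 1))
print([s.location for s in stale], conflicts)
```

A list like this is a starting agenda for the validation sessions in question 3, not a substitute for them.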

The work is tedious. It's not the part anyone puts in a case study. There are no posts on LinkedIn about documentation sprints and SME review cycles.

But when a vendor screenshares your files and a teammate types "they're treating them like sacred texts" in the chat, you know the foundation is solid.

Everything else gets built on top of that.